Red Flags Raised

Concerns surfaced about the methodology behind Google's benchmark comparisons for Gemini AI. Experts such as Bindu Reddy questioned Google's decision to report Gemini's MMLU result using chain-of-thought prompting with 32 samples (CoT@32) rather than the standard 5-shot prompting commonly used for benchmarks like MMLU. Because the prompting method can shift a model's score considerably, critics argued that the headline comparison between Gemini and GPT-4 was not like-for-like.
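To make the difference between the two setups concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not Google's evaluation code: the function names, prompt format, sample answers, and threshold values are all hypothetical. Under 5-shot prompting, the model sees five solved examples and gives a single answer. Under CoT@32 as the Gemini technical report describes it, the model samples 32 chain-of-thought answers; if their agreement reaches a threshold (tuned on a validation split), the consensus answer is used, otherwise the model falls back to its greedy answer without chain of thought.

```python
from collections import Counter


def five_shot_prompt(examples, question):
    """Build a standard 5-shot prompt: five solved examples, then the test question.

    `examples` is a list of (question, answer) pairs; the Q:/A: format is illustrative.
    """
    if len(examples) != 5:
        raise ValueError("a 5-shot prompt needs exactly five worked examples")
    shots = "\n\n".join(f"Q: {q}\nA: {a}" for q, a in examples)
    return f"{shots}\n\nQ: {question}\nA:"


def uncertainty_routed_answer(cot_answers, greedy_answer, threshold):
    """Pick a final answer from k sampled chain-of-thought answers (hypothetical helper).

    If the most common answer's share of the samples reaches `threshold`,
    return it (majority vote); otherwise fall back to `greedy_answer`,
    the model's single greedy answer produced without chain of thought.
    """
    if not cot_answers:
        return greedy_answer
    answer, votes = Counter(cot_answers).most_common(1)[0]
    if votes / len(cot_answers) >= threshold:
        return answer
    return greedy_answer


# Hypothetical final answers extracted from k = 32 sampled reasoning chains.
samples = ["B"] * 20 + ["C"] * 8 + ["A"] * 4
print(uncertainty_routed_answer(samples, greedy_answer="C", threshold=0.5))  # B (20/32 = 0.625)
print(uncertainty_routed_answer(samples, greedy_answer="C", threshold=0.7))  # C (falls back)
```

Because a CoT@32 score aggregates many samples per question, it can come out higher than a single-pass 5-shot score for the same model, which is why critics wanted both models compared under the same setup.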

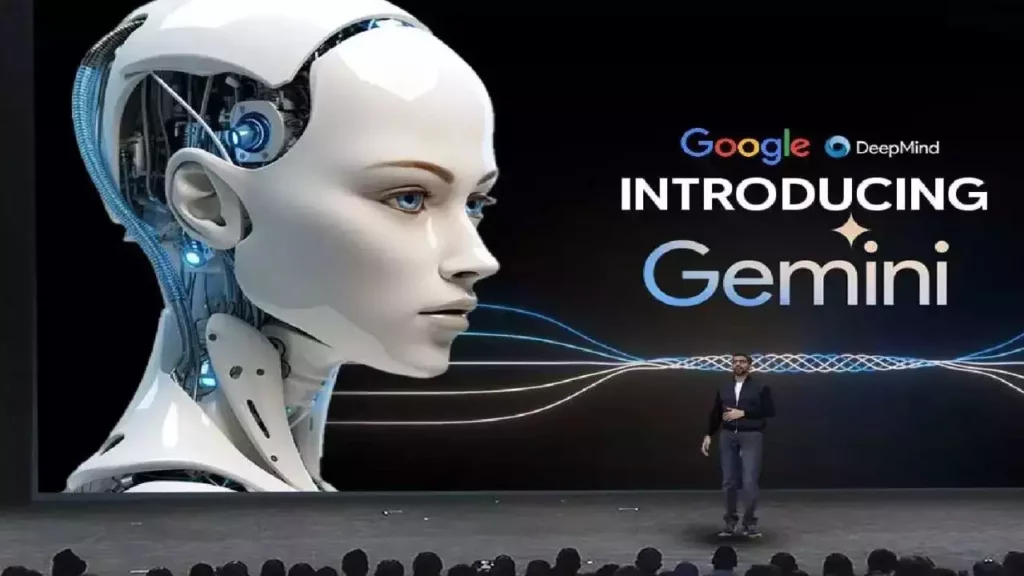

Video Accuracy Questioned

The launch video's portrayal of Gemini AI's capabilities also sparked debate. Clint Ehrlich, an attorney and computer scientist, argued that the video was misleading, pointing out that Gemini had been given still images and text prompts rather than interacting with the video content in real time. His critique raised questions about whether the video complied with applicable regulations and whether it could mislead viewers about Gemini's true capabilities.

Reports and Clarifications

Reports revealed discrepancies between the video's representation and Gemini's actual output. Google acknowledged that the footage had been edited but maintained that the capabilities shown were genuine and had only been condensed for brevity. The company said the video was intended to inspire developers and that the user prompts and outputs were real. However, the absence of clear disclaimers explaining how the footage had been edited remained a sticking point.

Google’s Response and Clarification (December 2023)

In response to the controversy, Google clarified how the video was made. Oriol Vinyals, one of the leaders of Gemini's development, stated that the video was built from sequences of still images and text prompts, and that it was meant to illustrate what multimodal experiences built with Gemini could look like and to inspire developers.

He emphasized that the user prompts and outputs were real but shortened for brevity. Vinyals also argued that users could achieve similar results through ordinary interactions with Gemini, albeit with the variability inherent in large language models.

Current Status (March 2024)

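As of March 6, 2024, there haven't been any major updates regarding the controversy surrounding the launch video. Gemini itself is publicly available: Gemini Pro began powering Bard in December 2023, and in February 2024 Google renamed Bard to Gemini and launched Gemini Advanced, built on Gemini Ultra 1.0.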

Looking Forward

The story of Gemini AI highlights the crucial role of transparency and responsible representation in AI development. The tech industry must prioritize clarity, acknowledge limitations, and adhere to regulations when communicating AI advancements. Only through such a commitment can we foster trust, ensure responsible development, and ultimately unlock the benefits of AI for all.

Google’s Duplex Controversy

It's important to note that this isn't the first time Google has faced questions about the legitimacy of its AI demonstrations. In 2018, the company's Duplex demo showcased an AI assistant placing phone calls to book appointments, including a restaurant reservation.

Critics later questioned whether the demo had been staged and whether it truly reflected the technology's real-world capabilities. This earlier controversy is a stark reminder of the importance of responsible representation in AI demonstrations and the need to avoid misleading the public.

Conclusion

The controversy surrounding Google’s Gemini AI serves as a valuable case study, highlighting the delicate balance between showcasing the potential of new technologies and ensuring transparency. Moving forward, the tech industry must prioritize responsible representation and clear communication to build trust and foster the ethical development of AI for the benefit of society.